Artificial Intelligence is transforming the world in ways we could not have imagined even a decade ago. From automating routine tasks to accelerating decision-making, AI has made everyday life more efficient and has contributed positively across critical sectors such as healthcare, agriculture, government, education, and beyond.

At the same time, this rapid transformation has sparked legitimate concerns—around data privacy, job displacement, and ethical dilemmas. These concerns deserve attention, not fear. AI is a useful servant, not a dangerous master. The future of AI depends not on the technology itself, but on how consciously humans choose to adopt and govern it.

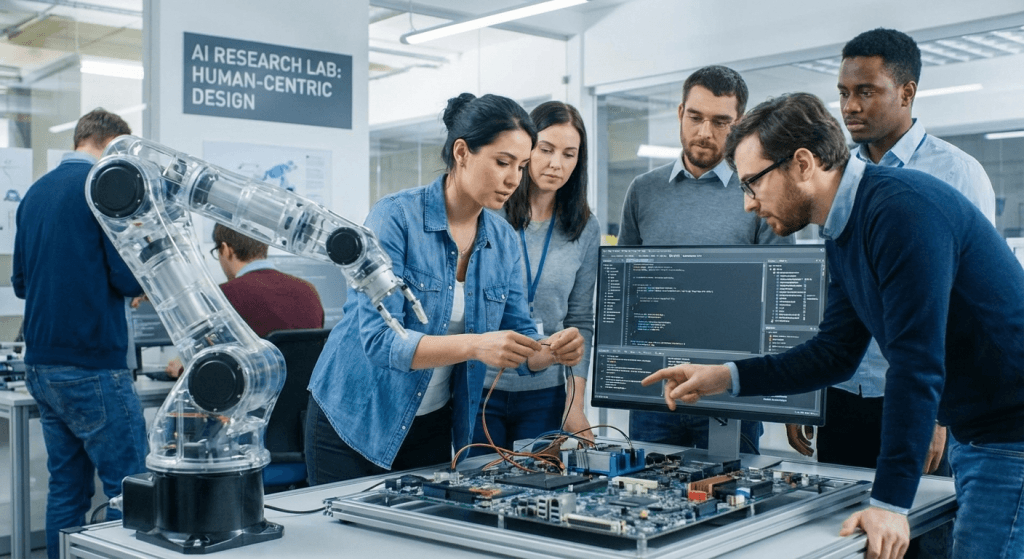

AI did not emerge on its own. It is invented, designed, trained, and constrained by humans. Every algorithm reflects human intent—what problems to solve, what data to use, and what limits to enforce.

AI has no emotions, moral reasoning, or independent consciousness. It does not possess intent; it executes instructions within predefined parameters. Because of this, AI cannot become a threat unless humans allow misuse or abdicate responsibility.

A tool can never surpass the accountability of the person who controls it.

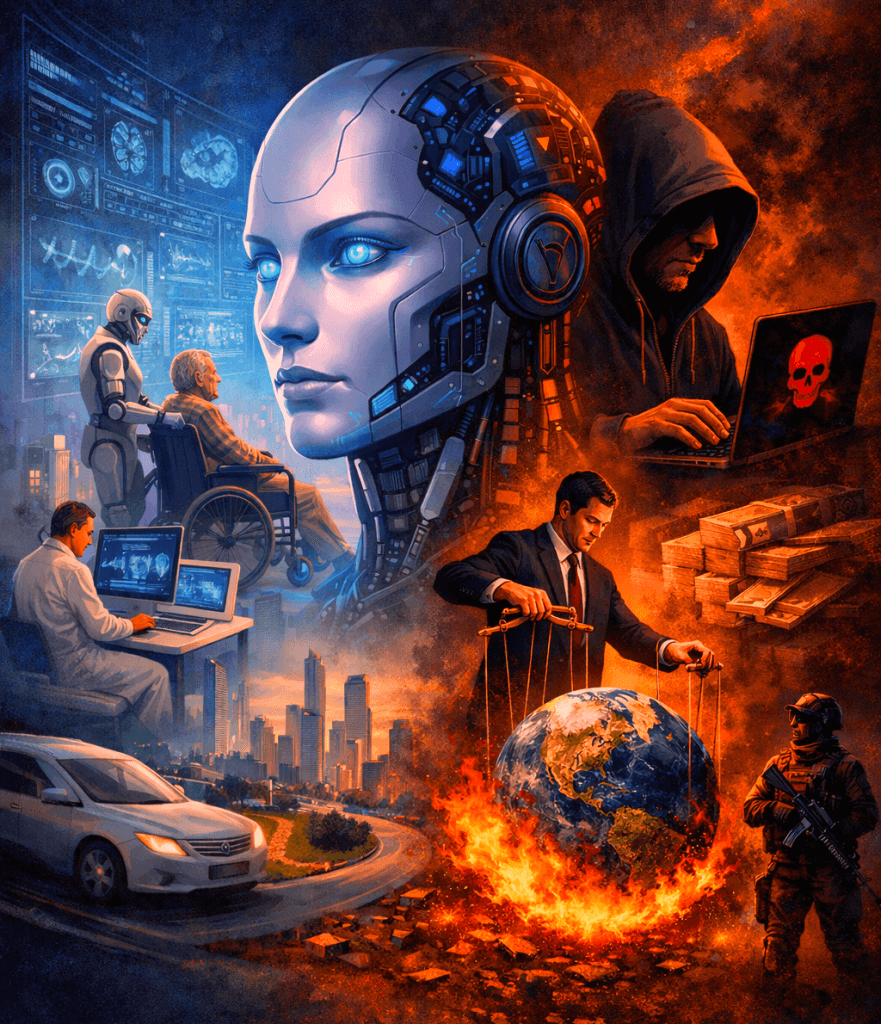

There is no denying AI’s positive influence. In healthcare, it improves diagnostics and patient outcomes. In agriculture, it enables precision farming and better resource management. In education, it personalizes learning. In government, it enhances efficiency and service delivery.

However, alongside these advancements come concerns about surveillance, data misuse, and workforce disruption. These challenges are not signs that AI is dangerous—they are signals that human oversight, regulation, and ethical judgment must evolve alongside technology.

Progress without responsibility creates risk. Progress guided by ethics creates trust.

AI, like any powerful technology, is neither good nor bad by nature. The risk lies in how it is applied.

When AI is used without transparency, safeguards, or ethical frameworks, problems arise. Privacy erosion, misinformation, and over-reliance on automation are consequences of human decisions—not machine intent.

Responsible AI adoption requires transparency, safeguards, and ethical frameworks.

Fear does not solve these challenges. Governance and awareness do.

A human-centric approach to AI adoption is essential. No system—no matter how advanced—can replace human touch, human intuition, or human empathy. These qualities form the foundation of trust, connection, and meaningful relationships.

AI can assist, enhance, and support—but it cannot feel, care, or ethically reason on its own. Human creativity, values, and emotional intelligence remain irreplaceable. When individuals and organizations recognize this, AI becomes a partner rather than a replacement.

Fear often stems from misunderstanding. Awareness builds confidence.

By educating ourselves about how AI works, where its limits lie, and how it should be used responsibly, we remove unnecessary fear from the conversation. Ethical frameworks, informed users, and clear accountability ensure AI remains aligned with human interests.

When humans stay informed, ethical, and alert, AI functions exactly as it was intended to: as a powerful assistant that improves lives and outcomes.

AI will never become a dangerous master as long as humans remain responsible leaders.

We created AI to serve humanity—not to control it. With a judicious, responsible, and ethical approach, AI can continue to enhance productivity, innovation, and social progress while preserving what matters most: human connection and values.

Copyright © 2024 Raji Imagery®. All rights reserved.
